An AI agent is only as useful as the workflow it's built into.

Add capacity without adding headcount

Purpose-built agents that work inside your real workflows

The technology behind AI agents has matured rapidly. What hasn’t matured as quickly is the thinking around how to deploy them — where they genuinely add value, what role they should play, and how to integrate them into an organisation in a way that actually holds up under real operating conditions. Agents work best when the workflow is already sound.

Most AI agent implementations fail quietly. The agent gets deployed, nobody is quite sure what it’s supposed to do, it produces outputs nobody acts on, and it gets shelved six months later. The technology worked fine. The design didn’t.

Changeable designs and deploys AI agents that are built around your actual workflows, your actual data, and the actual decisions your organisation needs to make. We define the role before we build the agent — because a well-defined role is what separates a useful agent from an expensive experiment.

What an AI agent actually is — and isn't

The term ‘AI agent’ is used loosely enough that it’s worth being direct about what we mean.

An AI agent is a system designed to perform a specific, ongoing function within a workflow. Unlike a chatbot that responds to prompts, or an automation that runs a fixed sequence of steps, an agent can interpret context, make decisions within defined parameters, take actions across connected tools and systems, and hand off to humans or other agents at the right moments.

That capability makes agents genuinely powerful for complex, variable workflows — the kind where the work changes depending on what comes in, where judgment calls are required, and where coordination across multiple systems is part of the job.

What agents aren’t: a replacement for clear thinking about what the agent is supposed to achieve. An agent with a vague brief produces vague, inconsistent outputs. The discipline of defining the role — its scope, its decision boundaries, its escalation triggers, its success criteria — is what most implementations skip and what we always do first.

Types of agents we design and deploy

We build agents matched to specific operational functions. The most common agent types we deploy for NZ organisations:

Research and intelligence agents

Agents that monitor sources, scan documents, aggregate information, and surface relevant findings to the right people at the right time. Useful for competitive intelligence, regulatory monitoring, knowledge management, and keeping leadership across fast-moving information environments without drowning in volume.

Document and data processing agents

Agents that read, classify, extract, and act on unstructured documents — contracts, forms, reports, emails, submissions. These handle the intake and routing work that currently consumes significant staff time, while maintaining a consistent, auditable record of every action taken.

Customer and stakeholder communication agents

Agents that handle first-response communications, triage incoming requests, provide status updates, and escalate to humans when the query requires judgment or relationship sensitivity. Designed with appropriate human review checkpoints and tone guardrails to ensure every interaction reflects your organisation correctly.

Operational decision-support agents

Agents that monitor workflows, flag exceptions, surface anomalies, and prompt the right person to make a decision at the right moment. These sit inside existing operational processes rather than replacing them — they make the process more responsive without removing human oversight from decisions that require it.

Internal knowledge and process agents

Agents that give staff fast, accurate access to internal knowledge — policies, procedures, precedents, past decisions — without requiring them to search manually or ask a colleague. Particularly valuable in organisations where institutional knowledge is concentrated in a few people or scattered across multiple systems.

Multi-agent orchestration

For more complex operational needs, we design coordinated agent systems where multiple agents with distinct roles work together — passing context, dividing tasks, and escalating appropriately. This is the architecture that enables genuinely sophisticated automation across complex, multi-step workflows.

How we design and deploy your agents

Agent deployment without a rigorous design process is how organisations end up with agents that nobody trusts and nobody uses. Our four-phase methodology ensures every agent we deploy has a clear purpose, a defined operating boundary, and a measurable performance standard from day one.

Phase 01

Use case discovery and role definition

We start by identifying where agents can add genuine value in your organisation — not where the technology is interesting, but where the operational need is real and the workflow is suited to agentic design. This involves analysing your current workflows, understanding the decisions that need to be made, and mapping the data and systems the agent will need access to.

Every agent gets a defined role brief before any building starts: what the agent does, what it doesn’t do, what decisions it can make autonomously, what must always go to a human, how its performance will be measured, and what happens when it encounters something outside its parameters.
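To make the idea concrete, a role brief can be captured as a small data structure the agent's routing logic reads from. The sketch below is illustrative only: the `RoleBrief` class, its field names, and the example actions are hypothetical, not a format we prescribe.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleBrief:
    """Hypothetical role brief; all field names are illustrative."""
    purpose: str                         # what the agent does
    out_of_scope: tuple[str, ...]        # what it doesn't do
    autonomous_actions: frozenset[str]   # decisions it can make on its own
    human_only_actions: frozenset[str]   # decisions that must always go to a human
    success_metric: str                  # how performance will be measured

    def route(self, action: str) -> str:
        """Return who handles an action: 'agent', 'human', or 'escalate'."""
        if action in self.human_only_actions:
            return "human"
        if action in self.autonomous_actions:
            return "agent"
        # Anything the brief does not cover is outside the agent's parameters.
        return "escalate"


# Example brief for an assumed submissions-triage agent.
brief = RoleBrief(
    purpose="Triage incoming submissions",
    out_of_scope=("Replying to submitters",),
    autonomous_actions=frozenset({"classify", "tag"}),
    human_only_actions=frozenset({"approve", "decline"}),
    success_metric="Share of submissions routed correctly",
)
```

Here `brief.route("classify")` resolves to the agent, `brief.route("approve")` to a human, and any action the brief never mentions is escalated rather than guessed at.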

Phase 02

Architecture and governance design

Before an agent is built, the governance structure around it needs to be clear. This means defining data access and permissions, establishing the human-in-the-loop checkpoints appropriate to the risk level of the agent’s actions, setting up logging and audit trail requirements, and designing the escalation path for exceptions.

For public sector clients and regulated industries, this phase includes explicit alignment with the Privacy Act 2020, the Algorithm Charter (where applicable), and any sector-specific accountability requirements. An agent making or influencing decisions that affect people needs to be accountable — and that accountability needs to be designed in, not retrofitted.

Phase 03

Build, integration, and testing

We build the agent against its defined role brief and integrate it into your existing systems and workflows. Testing happens on real work — not synthetic scenarios — before any agent goes live. We test for accuracy, consistency, edge case handling, and appropriate escalation behaviour.

Platform and tooling selection is driven by your environment, your team’s capacity to maintain the agent, and the specific requirements of the use case. We work with a range of agent frameworks and orchestration platforms and make recommendations based on fit, not preference.

Phase 04

Deployment, monitoring, and evolution

A deployed agent is not a finished project. Agent performance needs to be monitored, outputs need to be reviewed against quality standards, and the agent’s behaviour needs to be refined as it encounters real-world variation. We provide a structured handover that includes performance metrics, a review cadence, and a clear process for updating the agent as your requirements evolve.

We also provide staff orientation — making sure the people working alongside the agent understand what it does, where it makes decisions, where it escalates, and how to interact with it effectively. Agents that staff don’t understand don’t get used.

Governance is built in, not bolted on

An AI agent making decisions inside your organisation is an accountability question as much as a technology question. Every agent we design includes explicit governance provisions:

- Defined decision boundaries — what the agent can decide autonomously vs what always requires human sign-off

- Full audit logging — every action the agent takes is recorded and retrievable

- Escalation triggers — clear rules for when the agent hands off to a human and how that handoff works

- Data access controls — the agent accesses only what it needs to perform its function

- Performance review schedule — regular review of agent outputs against quality and accuracy standards

- Off-switch and override process — a clear, tested procedure for pausing or overriding the agent if needed

This governance structure is particularly important for public sector and regulated industry clients, where agent behaviour may be subject to OIA requests, audit, or regulatory scrutiny. Governance is built in from the start — not bolted on later.
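As a rough illustration of how several of these provisions fit together, the sketch below wraps an agent's actions in a decision boundary, an audit log, an escalation path, and an off-switch. The `GovernedAgent` class and its action names are assumptions made for the example, not a description of any particular platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class GovernedAgent:
    """Hypothetical wrapper showing governance checks around agent actions."""
    allowed_actions: set[str]                      # decision boundary
    paused: bool = False                           # off-switch state
    audit_log: list[dict] = field(default_factory=list)

    def request(self, action: str, detail: str) -> str:
        """Decide the outcome of a requested action and record it."""
        if self.paused:
            outcome = "blocked_paused"
        elif action in self.allowed_actions:
            outcome = "executed"
        else:
            # Outside the boundary: hand off instead of acting.
            outcome = "escalated_to_human"
        # Every request is logged, whatever the outcome.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "detail": detail,
            "outcome": outcome,
        })
        return outcome

    def pause(self) -> None:
        """Off-switch: block all further actions until resumed."""
        self.paused = True


agent = GovernedAgent(allowed_actions={"classify"})
first = agent.request("classify", "document 1")
second = agent.request("approve", "document 1")
agent.pause()
third = agent.request("classify", "document 2")
```

In this sketch the first request is executed, the second is escalated because it sits outside the boundary, and the third is blocked by the pause. All three appear in `audit_log`.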

What you receive

Every AI Agents engagement produces a complete, documented deployment:

- A use case assessment documenting where agents fit in your workflows and the expected value of each

- A role brief for each agent defining scope, decision boundaries, escalation triggers, and success criteria

- A governance and data access design aligned to your sector’s requirements

- One or more agents fully built, integrated, and tested against real workflows

- Staff orientation materials explaining how to work effectively alongside the agents

- Performance metrics and a monitoring approach so you can see the value being delivered

- A roadmap for evolving or extending the agent capability over time

Engagement scope varies based on the number of agents being deployed and the complexity of the workflows involved. A single, well-defined agent can be delivered in three to four weeks. Multi-agent systems and enterprise deployments typically run six to ten weeks.

Who this is for

SMBs that need leverage without growing headcount

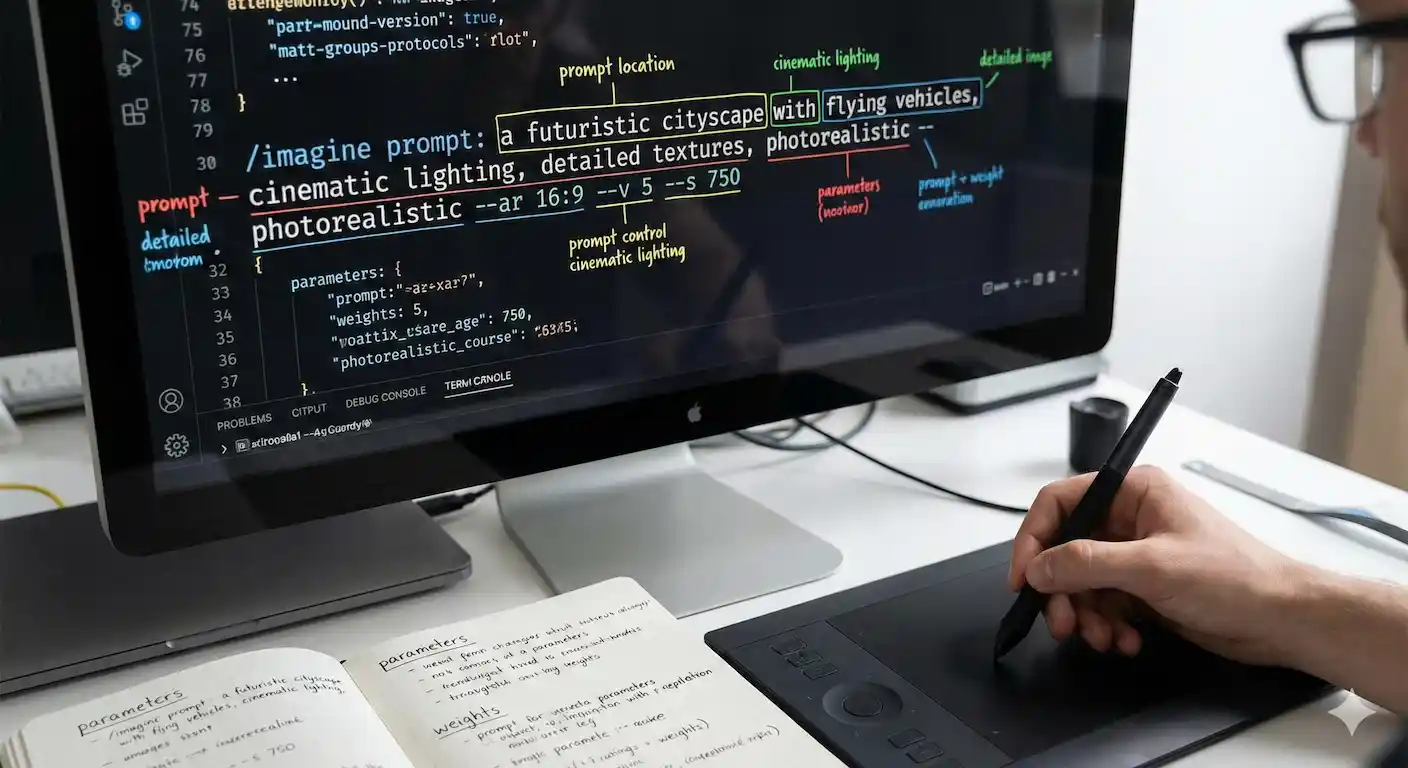

Small and medium businesses often hit a capacity ceiling where the volume of work that needs to happen exceeds what the team can manage without either hiring or dropping quality. A well-designed agent targeted at your highest-volume, most repetitive operational work can release significant capacity without the overhead of a new employee. The key is starting with a clearly defined, bounded use case — not trying to automate everything at once. If you’re new to AI agents, watch how a practitioner builds their own AI assistant.

Councils and public sector organisations

Public sector organisations deal with high volumes of structured and unstructured information — submissions, requests, correspondence, compliance checks, reporting — much of which requires consistent, auditable handling. Agents designed for these workflows can dramatically improve throughput and consistency while maintaining the accountability standards that public sector work demands. We build public sector agents to a governance standard that survives OIA scrutiny and audit.

Enterprises building agentic capability across teams

Larger organisations are increasingly moving beyond individual AI tools toward coordinated agent systems that work across departments. This requires a different design approach — one that considers how agents hand off to each other, how governance is maintained across a complex system, and how the whole architecture is maintained and evolved over time. We have the business analysis and systems design capability to approach this at enterprise scale.

Organisations that have tried AI tools and hit the ceiling

If your organisation has been using AI tools — copilots, chatbots, standalone AI assistants — and found that they deliver value in limited contexts but don’t connect to your real operational workflows, you’re at the natural ceiling of general-purpose tools. Custom agents are what comes next: purpose-built, integrated into your systems, and designed around the specific decisions and workflows your organisation actually runs.

Have a question about AI Agents?

What is an AI agent and how is it different from a chatbot?

A chatbot responds to prompts — it’s reactive and conversational. An AI agent is designed to perform an ongoing operational function: it interprets context, makes decisions within defined parameters, takes actions across connected systems, and hands off to humans or other agents at appropriate points. An agent doesn’t wait to be asked — it operates continuously within its defined workflow. The distinction matters because agents can handle complex, multi-step work that chatbots can’t, but they also require more rigorous design and governance.

How is an AI agent different from workflow automation?

Workflow automation follows fixed rules: if X, do Y. It’s excellent for structured, predictable tasks. An AI agent can handle variability — it can interpret unstructured inputs, make judgment calls within defined boundaries, and adapt its response based on context. In practice, most effective deployments combine both: automation for the rules-based parts of a workflow, agents for the parts that require interpretation or variable decision-making. We design the right architecture for each use case rather than defaulting to one approach.
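A minimal sketch of that combined pattern: fixed rules handle the predictable cases, and anything they don't match is passed to an interpreting step. The function names, queue names, and the `stub_interpreter` stand-in are all hypothetical. In a real deployment the interpreter would be a model call, which is deliberately left out here.

```python
from typing import Callable, Optional


def rule_based_route(message: dict) -> Optional[str]:
    """Fixed rule: if X, do Y. Returns None when no rule applies."""
    if message.get("form_type") == "address_change":
        return "update_address_queue"
    return None


def handle(message: dict, interpret: Callable[[str], str]) -> str:
    """Try the rules first; fall back to interpretation for variable input."""
    queue = rule_based_route(message)
    if queue is not None:
        return queue
    return interpret(message.get("body", ""))


def stub_interpreter(text: str) -> str:
    """Stand-in for the agent; a keyword check used only for illustration."""
    return "complaints_queue" if "complaint" in text.lower() else "human_review"
```

A structured address-change form never reaches the interpreter, while a free-text email does. Unrecognised input falls through to human review rather than being forced into a queue.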

Do AI agents replace staff?

Our design philosophy is explicit on this: agents should augment human capability, not replace it. The agents we deploy are designed to handle the high-volume, repetitive, or data-intensive work that currently consumes staff time but doesn’t require the judgment, relationships, or contextual knowledge that make people valuable. The freed capacity gets redirected to higher-value work. We build this into the design brief for every engagement and include change communication guidance to ensure staff understand how the agent changes their role.

How do you ensure agents behave safely and consistently?

Every agent we deploy has a defined operating boundary — what it can do autonomously and what must always go to a human. Audit logging is built in from the start so every action is traceable. We test extensively on real data before deployment, including deliberately testing edge cases and failure modes. We establish a performance review cadence so output quality is monitored over time. And we design a clear override and off-switch procedure so there’s never ambiguity about how to pause or correct the agent if something unexpected happens.

What systems and tools do agents integrate with?

Agents integrate with whatever systems are relevant to the workflow: CRM, document management, email, communication platforms, internal databases, cloud storage, and APIs. Platform selection is driven by your existing environment and what will be maintainable by your team. We are platform-agnostic and work with a range of agent frameworks and orchestration tools. That said, our preferred stack is Anthropic’s Claude, one of the most capable and safe AI models available, combined with n8n for agent workflow orchestration.

Can agents be built for regulated or public sector environments?

Yes, and this is an area we have specific experience in. Public sector and regulated industry deployments require additional governance provisions — explicit alignment with the Privacy Act 2020, audit trail design that satisfies OIA and regulatory requirements, human oversight at the appropriate decision points, and documentation that can be reviewed by a governance committee or auditor. We build to these standards by default for public sector clients.

How long does it take to deploy an agent?

A single, well-defined agent in a clearly understood workflow can be designed, built, and deployed in three to four weeks. More complex agents, or multi-agent systems, typically take six to ten weeks. The biggest variable is the quality of the use case definition at the start — organisations that can clearly articulate what the agent needs to do and what data it needs access to move significantly faster than those where that work still needs to be done.

What happens after the agent is deployed?

Deployment is the start of the agent’s operational life, not the end of the engagement. We provide a structured handover including staff orientation, performance metrics, a monitoring approach, and a review schedule. We also provide ongoing advisory support for agent evolution as your requirements change — adding new capabilities, adjusting decision boundaries, or extending the agent to adjacent workflows.